Towards Workers’ Data Collectives

Christina Colclough

The commodification of workers as a consequence of increased digital monitoring and surveillance is well underway. Through advanced predictive analytics, work and workers across the world are becoming datafied to the detriment of fundamental, human, and workers’ rights. This essay argues that trade unions must expand their services to include collective control over workers’ and work data through the formation of what I term Workers’ Data Collectives. However, to do so, unions urgently need to address regulatory gaps and negotiate for much improved workers’ data rights in companies and organizations. Without these two goals for the collectivization of data and an alternative digital ethos backed by new regulatory institutions, I argue, union and worker power will be significantly diminished leading to irreparable power asymmetries in the world of work.

Introduction: The asymmetric datafication of society and work

Currently, the United States and China together account for 90 percent of the market capitalization of the world’s 70 largest digital platforms.1 These platforms, in turn, are superpowers dominating markets and societies. Microsoft, followed by Apple, Amazon, Google, Facebook, Tencent, and Alibaba, account for two-thirds of these platforms’ total market value. At the same time, roughly 50 percent of the world’s population still does not have access to the internet.2 The majority of this population is in the developing world. The companies mentioned above – collectively referred to as Big Tech – are exploiting the lack of public programs to expand internet coverage in these areas by offering platform-controlled internet access.3 These programs have been criticized for data leaching, de facto internet control, the squeezing out of local businesses, censorship, and impeding the freedom of individuals.4,5 It is with good reason that many, myself included, speak of a de facto digital colonialism. But data colonialism isn’t just a tech company endeavor. Chinese companies have exported artificial intelligence surveillance technologies to more than 60 countries including Iran, Myanmar, Venezuela, Zimbabwe, and others with dismal human rights records. Reports put the figure at 63 countries, 36 of which have signed up for China’s politically-led Belt and Road Initiative.6

Data is being extracted from the actions and non actions of citizens across the world at a never-ending rate. Your smartphone’s 14 sensors track your internet activity, your use of e-services, your credit card payment information, your shopping habits, what apps you use and when, what you do, where you go, how you go there, your surroundings, and much more. All of this data is used to profile you and make predictions about you. These extraction systems remain obscure, hidden under the hood, while engaging in constant surveillance and making predictions about what you will or should do, what information should or shouldn’t be made available to you. But it’s not only about you. The probability analyses and inferences affect people like you – those alive now and those not yet born.

Now, think of all that data (picture it as a constant information flow you are knowingly or unknowingly giving away about yourself), and ask what influence it can or might have on your work and career? It is not difficult to put a profile together on the type of worker you are: investable or less so?

Job announcements are made almost exclusively online now. Will an algorithm predetermine your suitability and accordingly hide from or show you an available job? Probably. Research7 has shown that even when employers try to reach all audiences with a potential ad, the audience is mediated by – to use one example – Facebook’s algorithm. It is often the algorithm, rather than the employer, that decides whether you are a likely candidate and if the job announcement should be made available to you. Isn’t this an infringement on our liberty to form and shape our own life? What kind of power are we allowing these companies to wield in the process?

This may sound like science fiction or an episode out of ‘Black Mirror’ but it isn’t. At work, our companies are also becoming data miners and creators. Applicant Tracking Systems, that is, software used by companies to assist with human resources, recruitment, and hiring are estimated to be used by 98 percent of Fortune 500 companies.8 Candidates can be screened, sourced, assessed, interviewed, and vetted by artificial intelligence systems.9 On the job, you can be subject to algorithmic systems that measure your productivity or efficiency, schedule your workday, monitor the breaks you take, and those you don’t. Algorithms can plan the exact route you should take on the warehouse floor, or on the road between clients.

The increasing number of workers working remotely in the aftermath of the Covid-19 pandemic has only increased corporate demand for surveillance and monitoring software.10 Some of these tools enable stealth monitoring, automated and periodic screenshot taking, video feeds, audio recording, keyboard tracking, optical character recognition, keystroke recording, or location tracking. Naturally, this often deep and intrusive surveillance11 raises serious concerns about workers’ privacy. It also demands that we ask: What is happening with the data that is being mined? Who has access to it? What is it used for? Should this data be mined in the first place? What about workers’ rights to be who they are and safeguard their privacy rights? How do we ensure that our workplaces are diverse and inclusionary? Are algorithmic systems in compliance with human rights and anti-discrimination laws? Are companies selling datasets that include personal information about their workers to the multi-billion dollar sector of predictive analyses? We must ask these questions and improve our collective agreements so that they protect our human rights, our freedom of speech, freedom of thought, of assembly, and of being human. Through collective agreements, we can create an alternative digital ethos free from surveillance capitalism and predictive analyses.

A good place to start is right here, right now, by asking what rights workers have over data. It is a question not sufficiently raised and discussed. But the value or importance of workers’ data is undeniable. In European Parliament amendments to the now adopted General Data Protection Regulation (GDPR), there were far more substantive articles on workers’ data protection. In California, an amendment to the data protection law – the California Consumer Privacy Act (CCPA) – to exempt workers’ data from its scope was met partially through an exemption that lasts until 2021.12,13 In other countries, employees are either directly excluded from data protection regulations (Australia, Thailand) or employers require employees’ informed consent to process data. However, as the GDPR clearly states, given the power asymmetry between workers and companies, informed consent should not be regarded as a legal basis for processing employee data.14,15 In other words, if your employment depends, directly or indirectly, on providing consent to data processing, you have little choice but to comply.

In the above, we have established that workers’ data is gathered and generated by companies, and that these data can be used in corporate decision-making, and transferred, sold, or used by third parties. We have also discussed that these data can directly influence your work and career prospects, and affect workers like you. Yet, as a worker, you have few, if any, rights in relation to these data and how they are used. The power asymmetry is thus growing between you and the companies which seem to know or infer information about you that can directly affect your life. For workers to maintain any control over their working lives, this power divide needs to be bridged. But we need to go further and ask: what if workers themselves controlled workplace data, drew insights from them, and used them to campaign for better working conditions, inclusive and diverse labor markets, fundamental rights, and new laws? The following sections will explore how we could make this happen.

Step 1: Establishing workers’ (data) rights

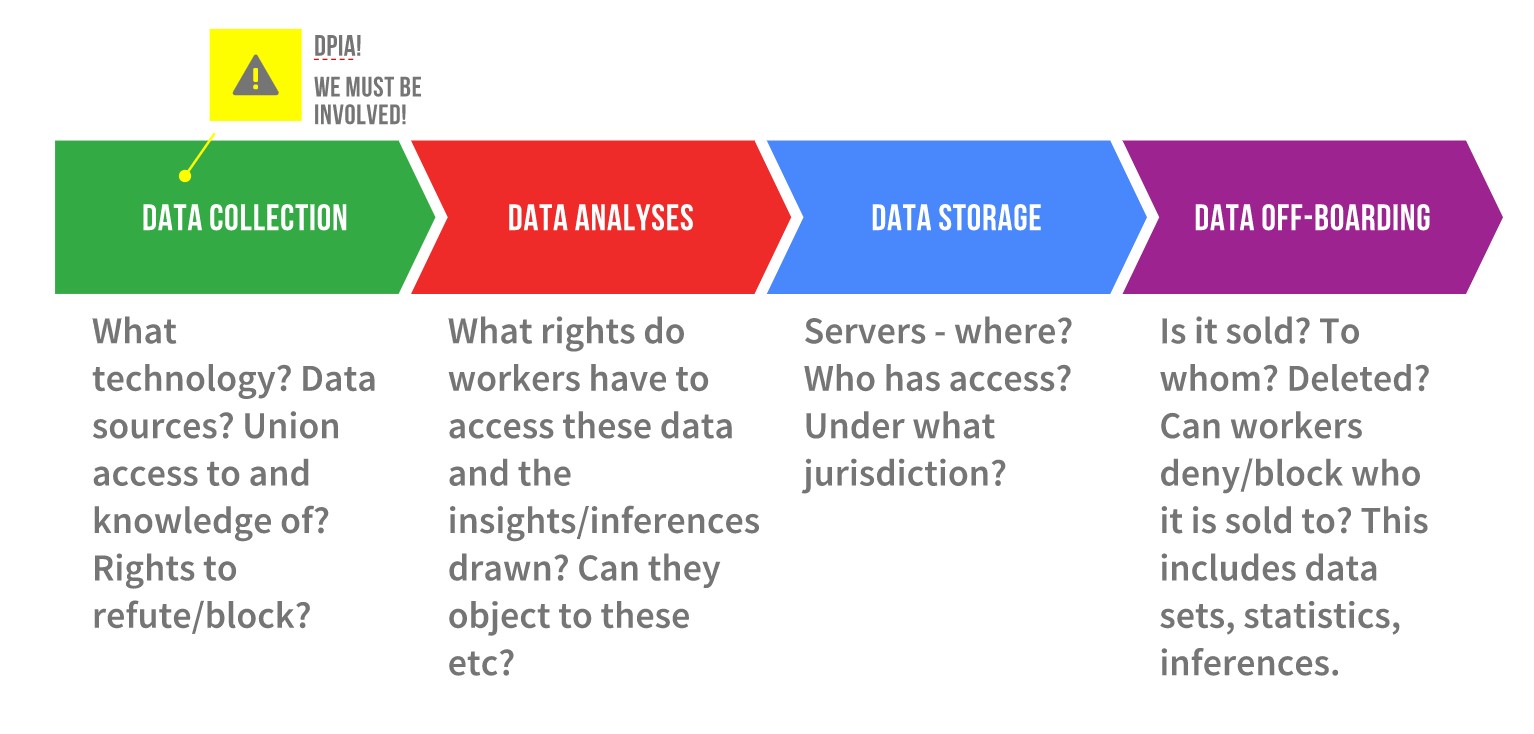

To date, very few collective agreements include specific articles on workers’ data rights. So we need to first ask, what should these data rights cover? The figure below depicts what I call the data life cycle at work and provides the grounds on which unions should intervene to improve workers’ rights.

Figure 1: the Data Life Cycle at Work, by Christina Colclough

The data collection phase covers both internal and external data collection tools, sources of the data, whether shop stewards and employees have been informed about the intended tools, and whether they have the right to refute or block (parts of) said tools and their data sources. In addition, unions should demand that the shop stewards, on behalf of a worker or group of workers, gain access to the data/datasets and inferences that include employee data or personally identifiable information.

In the data analyses phase, unions must cover the gaps in current regulation and ensure rights with regards to the inferences – profiles, statistical probabilities, etc. – made using algorithmic systems and datasets. Workers should have greater insight into, access to, and rights to rectify, block or even delete inferences made about them. Since these inferences can be used to determine work scheduling and wages (if linked to performance metrics), or can be leveraged by human resources to decide who to hire, promote, or fire, access to them is key to the empowerment of workers.

Data storage might at first glance seem boring, but it is actually really important, and will become even more so if current e-commerce negotiations within and on the fringes of the World Trade Organization (WTO) are actualized.16 These discussions go far beyond facilitating the buying and selling of online goods and services and propose the following: a) prohibiting data localization, which means that governments cannot require by law that data be stored under the jurisdiction of the home country; b) establishing a corporate right to transfer data across borders and store such information wherever companies want, including in data havens; c) banning governments from demanding disclosure of source codes and algorithms, even in cases where it may be necessary for security reasons. (For a full list of proposals, see pages 17-18 of this report by Deborah James.)

To put it simply, these proposals say that data must be allowed to be moved across borders to what, we can expect, will be areas which have the least privacy protection. They will then be used, sold, rebundled, and sold again in whatever way corporations see fit. The recent European Court of Justice ruling17 which invalidates the EU-US Privacy Shield can be seen as a slap in the face of proponents of unrestricted flow of data, but the demand is nonetheless still on the table.

Finally, the data off-boarding phase is also one where workers and unions must be vigilant. Off-boarding refers to the deletion of data, but also the selling or passing on of data and inferences/profiles/datasets to third parties. Unions should negotiate for much better rights with regards to: a) knowing what data/datasets/inferences are off-boarded, b) who they are off-boarded to, and c) objecting to the off-boarding to third party(ies) and even blocking it. The need to negotiate these rights acquires great urgency in light of the e-commerce negotiations within the WTO (but also in other plurilateral trade negotiations). As Shoshana Zuboff asserted in her speech at Rightscon 2020:

“…human futures markets [predictive analytics] need to be criminalized, they need to be made illegal. They cannot stand. Human futures markets have predictably anti-democratic consequences. Those consequences are already clear. The economic imperatives of surveillance capitalism are a direct result of the financial incentives in those markets.” (my insert)

With successful data life cycle negotiations we will move towards collective rights in a datafied world. If workers have rights to these data, they will also have the right to decide what to do with them, for instance, share or pool them towards beneficial ends. It is to this we now turn.

Step 2: Forming the workers’ data collective

Before I jump into describing my data collective dream, let’s dwell a bit on the state of play in various forms of data collectives. There is a growing body of literature on data trusts, data commons, open data, and, more broadly, data stewardship. A commonality between these ideas is that they seek to empower the majority, and not just a few, through access to and (partial) control over aggregates of data. Another commonality is that the legal structures and institutions to empower groups of individuals through the collectivization of data are currently not really in place.18 The idea of an empowering structure is really still that – an idea.

There are existing examples of open data. Most are provided by private companies (for example, Facebook’s Data for Good, Google’s BigQuery which hosts a range of public and private datasets, Sage Bionetworks’ open data for the advancement of human health), some by international organizations (like the United Nations), and others are publicly-funded research projects (such as DECODE).

Whereas open data can be beneficial for groups, they are not explicitly constructed to be. Data trusts, however, for the most part, are constructed to be beneficial for the collective, as Sean McDonald, one of the world’s leading experts on data trusts, notes in this article, and as Sylvie Delacroix and Neil D. Lawrence advocate in much of their work.19

We have, in sum, a growing desire to make data beneficial to the collective, but not necessarily clearly-defined laws and institutions to facilitate this. So let me remain in the sphere of ideas and ideology and define the purpose and function of what I see could be an interesting and empowering data collective for workers.

What’s in the workers’ data collective?

A successful negotiation of the data life cycle in favor of stronger data rights will put all workers in a position to make decisions on data that are personal and/or personally identifiable. For example, your work data including education, skills, age, gender identification, wages, job responsibilities, contract, location during the work day, speed of work, time spent at work, length of commute etc., should be for you to pool into a collective structure that is aimed at representing your best interests. The cooperative Driver’s Seat is an example par excellence of pooling said data.

In addition, you could add to the collective, at least in principle and until they are banned, the inferences your company (private or public) has made about you: are you identified as a productive worker, and on what grounds?

The app WeClock, which was developed in the lab I was leading, adds a further source of work-related data that you could pool into the collective. This app gives you plenty of insights into your daily work conditions. How much time do you spend on your feet? Do you get any breaks? How much work is creeping into your private life as you send emails or answer company Slack messages after hours? And much more.
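To make the question of work creeping into private life concrete, here is a minimal sketch of how a worker or a collective might measure it. It is not WeClock's code or export format: the function name `after_hours_share`, the 09:00 to 17:00 working day, and the sample timestamps are all assumptions made for illustration.

```python
from datetime import datetime, time


def after_hours_share(timestamps, start=time(9, 0), end=time(17, 0)):
    """Return the fraction of work messages sent outside contracted hours.

    A message counts as after hours if it was sent on a Saturday or Sunday,
    or outside the half-open interval [start, end) on any other day.
    The default hours are an illustrative assumption, not a standard.
    """
    if not timestamps:
        return 0.0
    outside = sum(
        1
        for ts in timestamps
        if ts.weekday() >= 5 or not (start <= ts.time() < end)
    )
    return outside / len(timestamps)


# Hypothetical log of when one worker sent work emails or chat messages.
sent = [
    datetime(2020, 8, 3, 10, 15),  # Monday, within contracted hours
    datetime(2020, 8, 3, 21, 40),  # Monday evening
    datetime(2020, 8, 4, 8, 5),    # Tuesday, before the working day starts
    datetime(2020, 8, 8, 11, 0),   # Saturday
]

print(after_hours_share(sent))  # 0.75
```

A single worker's figure is an anecdote. The same figure pooled across many colleagues is the kind of evidence a union can take into a negotiation.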

Now imagine that many of your colleagues did the same and pooled their data into the collective. The Workers’ Data Collective would then be in possession of lots of work-related data. New data as they come in, and old data as they get supplemented by the new.

Governing the collective

Data in itself is not useful. It needs to be structured. The purpose of this structuring, that is, what it is structured for, will be determined by the statutes of the Workers’ Data Collective. Let’s imagine the collective has a policy to combat wage theft – a multi-billion-dollar20 crime against workers. Data could be structured to find patterns in worked hours relative to paid hours. Or, the collective could be mandated to track and combat discrimination. The purposes can change over time, just as resolutions are voted for in democratic organizations. The Governing Board, known in data trust language as the trustees or settlors, will be voted in. It will be responsible for taking decisions about the collective’s data in the best interest of those who have submitted their data to the collective. The Governing Board members could be selected among data holders, but do not have to be. They could be a third party – a team of data collective governance experts.
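As an illustration of structuring data to compare worked hours with paid hours, consider the sketch below. The record layout (`ShiftRecord`), the field names, the 15-minute tolerance, and the sample figures are all hypothetical. A real collective would define these in its statutes and data governance policies.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ShiftRecord:
    worker_id: str       # pseudonymous identifier (hypothetical field)
    week: str            # ISO week label, e.g. "2020-W31"
    hours_worked: float  # e.g. logged by the worker
    hours_paid: float    # e.g. taken from the payslip


def unpaid_hours_by_worker(records, tolerance=0.25):
    """Sum, per worker, the hours worked in excess of the hours paid.

    Weekly gaps at or below `tolerance` hours are ignored as rounding noise.
    The tolerance value is an assumption chosen for this example.
    """
    totals = defaultdict(float)
    for record in records:
        gap = record.hours_worked - record.hours_paid
        if gap > tolerance:
            totals[record.worker_id] += gap
    return dict(totals)


# Made-up records pooled by three workers.
records = [
    ShiftRecord("w1", "2020-W31", 42.0, 40.0),
    ShiftRecord("w1", "2020-W32", 40.0, 40.0),
    ShiftRecord("w2", "2020-W31", 38.5, 35.0),
    ShiftRecord("w2", "2020-W32", 36.0, 35.0),
    ShiftRecord("w3", "2020-W31", 40.1, 40.0),
]

print(unpaid_hours_by_worker(records))  # {'w1': 2.0, 'w2': 4.5}
```

The point of the sketch is that the structure follows the purpose. A collective mandated to track discrimination would keep different fields and ask different questions of them.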

The overall aim of the collective could, for example, be to ensure Rewarding Work for all workers. The statutes will determine how this should be done and through which policies; whether datasets can be sold for the benefit of the collective, and/or whether external access to the aggregated or even raw data can be granted, and if so, under what conditions. The statutes will stipulate the decision-making structure and the roles of the collective’s staff – those tasked to structure and analyze the data and those with legal, communications, administrative, and engagement skills. It is here that workers and their unions could put into action the principles of data minimization, ethical data handling, and human rights before profit. The data collective must also have strict data governance policies and practices in place to protect the integrity and rights of those who have donated their data to the collective. This is necessary not least in order to prevent a third party from obtaining data from the collective without its direct agreement. The collective will need elaborate cyber security systems to ensure ethical and fair sharing of data and data security for all participants. It will also need to document all of the above.

With this framework in place, a Workers’ Data Collective will have a well-defined aim, purpose, and governance structure. These details should be made clear to workers who are considering putting their data into the collective, and so should the redlines for what the collective will not allow. One redline could be that no access to data be granted to union busting firms, their intermediaries, or known partners. Another redline could disallow predictive analytics. The Governing Body could be tasked with the responsibility of ensuring that these redlines are respected. It will also be necessary to have internal methods of redress in place to ensure that the data collective is complying with the given aims and purposes.
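Redlines of this kind can be written down as explicit, checkable rules, which also makes them easier to audit. The sketch below assumes a hypothetical `AccessRequest` record and hypothetical category labels. It only shows the shape of such a check, not a complete governance system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    requester: str
    requester_type: str   # hypothetical label, e.g. "researcher"
    purpose: str          # hypothetical label, e.g. "wage_theft_audit"
    wants_raw_data: bool


# Redlines taken from the examples in the text; the labels are assumptions.
BLOCKED_REQUESTER_TYPES = {"union_busting_firm", "union_busting_intermediary"}
BLOCKED_PURPOSES = {"predictive_analytics"}


def review_request(request, raw_data_allowed=False):
    """Return (granted, reasons); every refusal reason is recorded for audit."""
    reasons = []
    if request.requester_type in BLOCKED_REQUESTER_TYPES:
        reasons.append("redline: requester linked to union busting")
    if request.purpose in BLOCKED_PURPOSES:
        reasons.append("redline: predictive analytics not permitted")
    if request.wants_raw_data and not raw_data_allowed:
        reasons.append("statutes: only aggregated data may be shared")
    return (not reasons, reasons)


ok_request = AccessRequest("University lab", "researcher", "wage_theft_audit", False)
bad_request = AccessRequest("Acme Consulting", "union_busting_firm", "predictive_analytics", True)

print(review_request(ok_request))   # (True, [])
print(review_request(bad_request))  # refused, with three recorded reasons
```

Returning the reasons alongside the decision gives the Governing Body, and any external regulator, a record to check against the statutes.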

To hold the various trusts accountable to the law, a public regulator tasked to oversee some aspects of data trusts must be established. This authority should have a dispute resolution mechanism to resolve issues within the data collective, such as a breach of rules and redlines. It also should have a data collective auditing mandate, and a law enforcement obligation. For data collectives with a transnational membership, the auditing and enforcement authority should be transnational and consist of national authorities. Here we can draw inspiration from the European Data Protection Board21 – an independent data protection authority whose purpose is to ensure consistent application of the GDPR. The International Labour Organization (ILO) would be a natural home for such an independent body.

The benefits of collectivizing data

In the above, we have established a two-step process towards empowering workers across the world in the digital economy. We need stronger workers’ rights to data and sound structures that will allow us to collectivize that data. To realize these benefits, behavioral, legal, and technical changes will need to be made. We will need to overcome our own lethargy, form new habits, establish new laws and new authorities at the national and global level. We will need new governance structures, technological solutions for secure data portability,22 and conscious choices about which collectives we will entrust with our data. These are daunting requirements. So what are the benefits?

To begin with, this will allow us to create an alternative digital economy where data is regarded as an infrastructure similar to roads, railway lines, water supplies, and energy. We will vastly reduce Big Tech’s control over our minds, emotions, actions – past and future. We might well succeed in actualizing Shoshana Zuboff’s demand that human futures markets be made illegal. We will ensure that information that is ours becomes responsibly useful to us. Trade unions across the world will get an additional and timely purpose, and we could expect greater mobilization towards this. We will undo the colonizing effects of the current e-commerce discussions and the skewed digital hegemonies and support, not hinder, the development of empowering digital transformations.

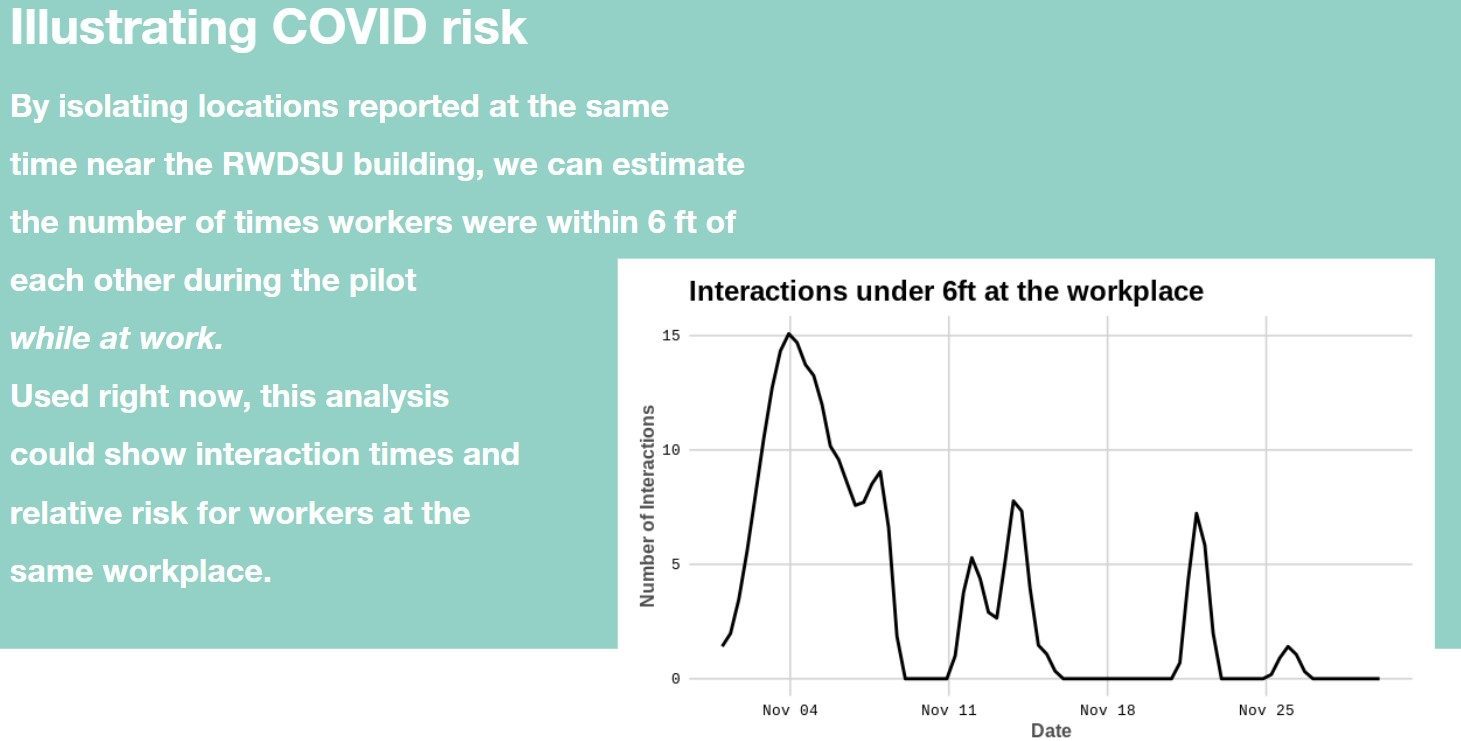

On a more practical level, we will pool resources such that we actually have access to persons with the skills and knowledge to protect our data on the one hand, and analyze it to our benefit, on the other. Digital storytelling and visualizations are a powerful means to campaign for change. At the MIT Media Lab, Dan Calacci analyzed and compared data from a WeClock trial in New York to show how often, on the same day, workers were within six feet of one another (see figure 2). Used in the context of the pandemic, this could show the relative risk for workers at the workplace.

Figure 2: Dan Calacci’s analysis of workers’ data from a WeClock trial in New York.
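A proximity count of this kind can be sketched in a few lines. This is not the method used in the analysis shown in the figure: it assumes that each worker's position is already available as (x, y) coordinates in metres on a shared local grid, sampled in common time slots, and the data below is invented.

```python
from itertools import combinations
from math import hypot

SIX_FEET_IN_METRES = 1.8288


def close_contacts(positions, threshold=SIX_FEET_IN_METRES):
    """Count, for each pair of workers, the time slots spent within `threshold`.

    `positions` maps a time slot label to {worker_id: (x, y)}, with x and y
    in metres on a shared local grid (an assumption of this sketch).
    """
    counts = {}
    for workers in positions.values():
        for (a, pos_a), (b, pos_b) in combinations(sorted(workers.items()), 2):
            distance = hypot(pos_a[0] - pos_b[0], pos_a[1] - pos_b[1])
            if distance <= threshold:
                counts[(a, b)] = counts.get((a, b), 0) + 1
    return counts


# Invented positions for three workers in two five-minute slots.
positions = {
    "09:00": {"w1": (0.0, 0.0), "w2": (1.0, 1.0), "w3": (10.0, 0.0)},
    "09:05": {"w1": (0.0, 0.0), "w2": (3.0, 0.0), "w3": (1.5, 0.0)},
}

print(close_contacts(positions))
# {('w1', 'w2'): 1, ('w1', 'w3'): 1, ('w2', 'w3'): 1}
```

Location data from phones is rarely accurate to six feet, so a real analysis would need to state its measurement error. The sketch only shows how pooled data turns into a count that can be visualized and campaigned on.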

The benefits do not stop there. The Workers’ Data Collective, like Driver’s Seat, could be used to test and challenge corporate algorithms. It will empower us as individuals and communities if we know who has our data and for what purpose(s).

The data collective could democratize the digital economy and empower workers to form and shape the world of work, advocate for regulatory change, and find remedies for persistent injustices. This will allow us to stop being “users” of digital technology, steered, controlled, and manipulated by algorithms and, instead, reclaim our humanity. This includes, not least, our human rights, our freedom of association, assembly, expression, thought, belief, and opinion.

Many data protection regulations across the world, even those aimed exclusively at consumers, are weak. We must fight for a digital ethos that is responsible and puts our rights above profit-seeking surveillance tools and predictive analytics. In the world of work, unions must be the guardians of this alternative ethos, and themselves become stewards of good data governance. Here an ILO Convention advocating for workers’ data rights will not only be an act of solidarity with workers in weaker institutional environments, but also a necessary step to prevent digital colonialism.

Existing power asymmetries will only widen if workers and their unions do not build capacity in the fields of data, algorithmic systems, and their governance. By negotiating the data life cycle, unions and workers will become much more familiar with, and insightful about, the potentials and challenges as well as the power of digital tools. For our ultimate aim of creating worker-owned and run data collectives, unions need to embrace this learning. Unions must work together smartly to build capacity.

Furthermore, by extending these rights across all parts of the value or supply chain, all workers and countries will be able to develop their own digital transformations without a priori being stripped of the ability to localize their data due to trade agreements.

I understand if you are now thinking: where do we start, and who will get the ball rolling? Here, we may be in luck as help is at hand. Let’s turn to the credit unions.

Credit unions as workers’ data collectives?

A 2019 white paper that I co-authored, ‘Data Cooperatives: Digital Empowerment of Citizens and Workers’, explored the existing trust relations between credit unions and their members. Credit unions act as fiduciaries towards their members and, in some constituencies, are already chartered to securely manage their members’ digital data as well as to represent them in a wide variety of financial transactions, including insurance, investments, and benefits.

Over 100 million people are members of a credit union in the United States today, and over 6 million in Europe.23 Worldwide, there are over 57,000 credit unions in 105 countries representing 217 million members.24

In Europe, many trade unions owned or controlled credit unions at least up until the global financial crisis. In the UK, the financial crisis and the consequential social and economic dismay renewed interest from the union movement to support and establish credit unions.25 Could credit unions be revitalized as Workers’ Data Collectives?

In our white paper, we argue:

“…it is technically and legally straightforward to have credit unions hold copies of all their members’ data, to safeguard their rights, represent them in negotiating how their data is used, to alert them to how they are being surveilled, and to audit the companies using their members’ data.”

Many trade union-associated credit unions share the same membership pool. For those that do, the institutional structure, the fiduciaries, and the size to start building a Workers’ Data Collective will already be in place.

Psst… It’s not only about workers

To emphasize, my vision is that many such data collectives will simultaneously exist. For workers and for many others. You, as an individual, can choose to pool your data into one or several data collectives. One might be a local collective for your community promoting climate sustainability. Another could be for health data with the goal to improve diagnosis and treatments. Some might prefer a capital gains trust, or one with the mission to improve competitiveness in local and regional markets. The point is, we will share our data with collectives which are trustworthy and whose aims and purposes align with ours.

This can, rightly, result in a battle of ideologies, but that, in turn, could enhance democracy and democratic participation. The task will be to avoid false inflation of individual data collectives. For this, we need the audit trail and authorities mentioned above. We will certainly also need public and transparent governance reporting as well as new laws and enforcement authorities. But with the Covid-19 pandemic wreaking havoc on our societies, economies, and health, we have the opportunity to think bold, think big, and change the very destructive path we are heading down.

End reflections

This essay has presented a multi-step vision for a Digital New Deal for workers and for citizens. Fixing data and privacy rights is not an end in itself. We will need to draw a new map for the digital economy and society. We will need to demand from our politicians that they think big – constructively. The current exploitation by Big Tech is not a fad. It won’t go away unless forced to by law. The vision outlined above is neither utopian nor unattainable. But it will require responsible and dedicated actions on our side. Now.

Notes

- 1 https://unctad.org/en/PublicationsLibrary/der2019_en.pdf.

- 2 https://www.weforum.org/agenda/2020/04/coronavirus-covid-19-pandemic-digital-divide-internet-data-broadband-mobbile/.

- 3 https://globalmedia.mit.edu/2020/04/21/the-rise-and-fall-and-rise-again-of-facebooks-free-basics-civil-and-the-challenge-of-resistance-to-corporate-connectivity-projects/.

- 4 https://www.amnesty.ch/de/themen/ueberwachung/dok/2019/ueberwachung-durch-facebook-und-google/191121_rapport_fb_google_surveillance-docx.pdf.

- 5 https://www.aljazeera.com/indepth/opinion/digital-colonialism-threatening-global-south-190129140828809.html.

- 6 https://www.oecd.org/finance/Chinas-Belt-and-Road-Initiative-in-the-global-trade-investment-and-finance-landscape.pdf.

- 7 https://arxiv.org/pdf/1904.02095.pdf.

- 8 Jon Shields. 2018. Over 98% of Fortune 500 Companies Use Applicant Tracking Systems (ATS). https://www.jobscan.co/blog/fortune-500-use-applicanttracking-systems/.

- 9 https://www.digitalhrtech.com/artificial-intelligence-talent-acquisition/.

- 10 https://hbr.org/2020/05/how-to-monitor-your-employees-while-respecting-their-privacy.

- 11 https://uk.pcmag.com/cloud-services/92098/the-best-employee-monitoring-software.

- 12 https://www.mintz.com/insights-center/viewpoints/2826/2019-10-california-consumer-privacy-act-brief-guide-covered.

- 13 https://leginfo.legislature.ca.gov/faces/billTextClient.xhtml?bill_id=201920200AB25.

- 14 https://ec.europa.eu/justice/article-29/documentation/opinion-recommendation/files/2001/wp48_en.pdf.

- 15 https://gdpr-info.eu/recitals/no-43/.

- 16 https://www.rosalux.eu/kontext/controllers/document.php/528.5/c/01b5be.pdf.

- 17 http://curia.europa.eu/juris/document/document.jsf;jsessionid=CF8C3306269B9356ADF861B57785FDEE?text=&docid=228677&pageIndex=0&doclang=EN&mode=req&dir=&occ=first&part=1&cid=9812784 .

- 18 https://theodi.org/wp-content/uploads/2019/04/General-legal-report-on-data-trust.pdf.

- 19 For example, International Data Privacy Law, Volume 9, Issue 4, November 2019, Pages 236–252, https://doi.org/10.1093/idpl/ipz014.

- 20 https://www.gq.com/story/wage-theft.

- 21 https://edpb.europa.eu/edpb_en.

- 22 The MIT Trust Data Consortium has developed a tool for this https://trust.mit.edu/.

- 23 http://www.creditunionnetwork.eu/cus_in_europe.

- 24 http://www.creditunionnetwork.eu/cus_globally.

- 25 https://www.researchgate.net/publication/326518987_Small_is_Beautiful_Exploring_the_Challenges_Faced_by_Trade_Union_Supported_Credit_Unions.

Dr Christina J Colclough is an advocate for the worker’s voice. She has been involved in regional and global trade labor movements, where she has been responsible for future of work policies, advocacy, and strategies. Christina now supports a wide range of progressive governments and organizations in their digital capacity building and transformation. She was named one of the world’s most influential women on the Ethics of AI in 2019. Contact and more information on: www.thewhynotlab.com. Find her on Twitter @CjColclough